
Estimating the efficiency of a ride-hailing service (Mobility Services)

In the first phase of the project, algorithms were developed to analyze how efficiently a ride-hailing or ride-pooling service can operate in a given city. Recently, the algorithm was parallelized: parts that can run concurrently were split up, and libraries that can use the HPC capabilities of the Evolve cluster were integrated. The remaining project time will be used to deploy the improved algorithms to the Evolve platform, to feed in the required data efficiently, and to evaluate different platform usage strategies and the performance gains they deliver.

Objective

While establishing ride-hailing and ride-pooling services, BMW has run pilots in various cities. One of the main findings was that the efficiency of a service depends strongly on the characteristics of each city. Hence, before a service is rolled out, an algorithm should indicate an efficient configuration for it. The algorithm can also be used to identify cities that are of special interest for new pilots.

Algorithm

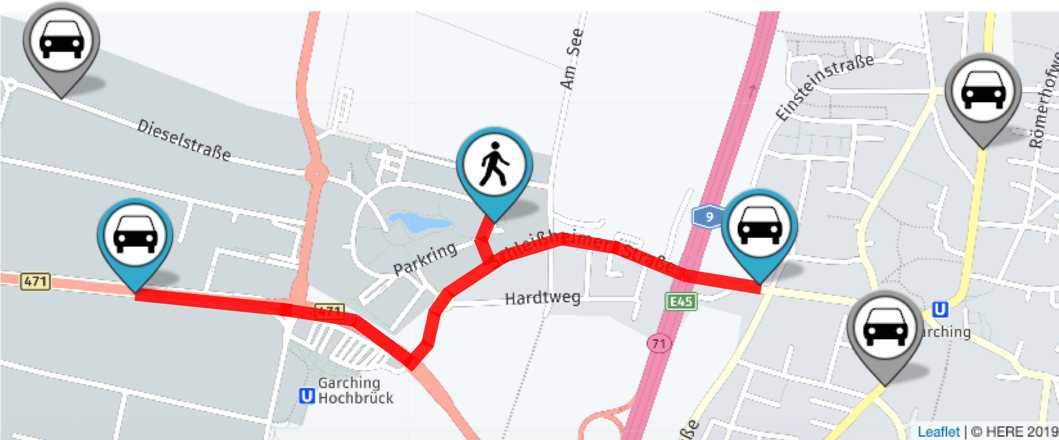

When evaluating a city, the algorithm takes several inputs. A demand data set specifies when and where customers request rides and to which destinations. This data set can be generated stochastically from the general land use, and historical demand data can be integrated if it is available for the city. Another static input is the road network on which the rides take place, given by a digital map of the city.
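As a rough illustration of stochastic demand generation, the sketch below samples trip requests from a few land-use zones. The zone names, weights, coordinates, and the `TripRequest` and `generate_demand` names are all hypothetical; the actual generation model is not described here.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class TripRequest:
    request_time: float              # seconds since the start of the simulation
    origin: tuple[float, float]      # (x, y) in km, hypothetical planar coordinates
    destination: tuple[float, float]


# Hypothetical land-use zones: centre coordinates and a relative trip weight.
ZONES = {
    "residential": {"centre": (0.0, 0.0), "weight": 5.0},
    "business": {"centre": (4.0, 1.0), "weight": 3.0},
    "leisure": {"centre": (2.0, 5.0), "weight": 2.0},
}


def generate_demand(n_requests, horizon_s, seed=0):
    """Sample requests whose origins and destinations follow the zone weights."""
    rng = random.Random(seed)
    names = list(ZONES)
    weights = [ZONES[name]["weight"] for name in names]

    def sample_point():
        zone = ZONES[rng.choices(names, weights=weights)[0]]
        cx, cy = zone["centre"]
        # Scatter points around the zone centre (1 km standard deviation).
        return (rng.gauss(cx, 1.0), rng.gauss(cy, 1.0))

    requests = [
        TripRequest(rng.uniform(0.0, horizon_s), sample_point(), sample_point())
        for _ in range(n_requests)
    ]
    # The simulation consumes requests in chronological order.
    return sorted(requests, key=lambda r: r.request_time)
```

Fixing the seed makes a generated data set reproducible, so different fleet sizes can be compared against identical demand.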

Besides these static inputs, several dynamic parameters specify the configuration of the service. At a minimum, the fleet size can be varied.

With these parameters, the algorithm simulates the service in the specific city, advancing in time steps. It both responds to customers within a few moments and periodically re-optimizes car-passenger assignments to find better matches. Every time a user sends a new trip request to the service provider, a simple nearest-neighbor heuristic decides whether the request can be accepted. It also provides a pickup time window for the user, based on when the closest available vehicle can arrive at the pickup location.
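The request handling can be sketched as follows. This is a minimal illustration, not the project's implementation: the `Vehicle` class, the `handle_request` function, the straight-line distance, the constant speed, and the `max_wait_s` and `window_s` thresholds are all assumptions made for the example.

```python
import math
from dataclasses import dataclass


@dataclass
class Vehicle:
    vehicle_id: str
    position: tuple[float, float]  # (x, y) in km
    available: bool = True


def handle_request(pickup, vehicles, now_s, speed_kmh=30.0,
                   max_wait_s=600.0, window_s=120.0):
    """Return (accepted, vehicle_id, pickup_window) for a new trip request."""
    candidates = [v for v in vehicles if v.available]
    if not candidates:
        return False, None, None

    # Nearest neighbour by straight-line distance; a real setup would use
    # travel times on the road network instead (assumption of this sketch).
    nearest = min(candidates, key=lambda v: math.dist(v.position, pickup))
    travel_s = math.dist(nearest.position, pickup) / speed_kmh * 3600.0

    # Reject the request if even the closest vehicle would arrive too late.
    if travel_s > max_wait_s:
        return False, None, None

    earliest = now_s + travel_s
    return True, nearest.vehicle_id, (earliest, earliest + window_s)
```

The returned vehicle is only a provisional match in this sketch; the pickup window is what the user is told immediately.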

After a predefined period of time, a global optimization is triggered. It makes the final decision on which vehicle of the fleet serves which of the users who have sent a trip request since the last optimization and have not yet been picked up.
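To show why a periodic global step can improve on first-come assignments, the sketch below searches all vehicle-to-request assignments for the one with the smallest total pickup distance. The objective, the exhaustive search, and the `optimize_assignment` name are assumptions for illustration; the optimization method actually used is not specified here, and exhaustive search is only feasible for very small batches.

```python
import math
from itertools import permutations


def optimize_assignment(vehicle_positions, pickup_positions):
    """Return (assignment, cost) with minimal total pickup distance.

    Both arguments map an id to an (x, y) position in km. The sketch
    assumes at least as many vehicles as open requests.
    """
    vehicle_ids = list(vehicle_positions)
    request_ids = list(pickup_positions)
    if len(vehicle_ids) < len(request_ids):
        raise ValueError("this sketch needs at least one vehicle per request")

    best_cost, best_assignment = math.inf, {}
    # Try every way of giving each request its own vehicle.
    for chosen in permutations(vehicle_ids, len(request_ids)):
        cost = sum(
            math.dist(vehicle_positions[v], pickup_positions[r])
            for v, r in zip(chosen, request_ids)
        )
        if cost < best_cost:
            best_cost = cost
            best_assignment = dict(zip(request_ids, chosen))
    return best_assignment, best_cost


vehicles = {"v1": (0.0, 0.0), "v2": (3.0, 0.0)}
pickups = {"r1": (1.4, 0.0), "r2": (-1.0, 0.0)}
assignment, total_km = optimize_assignment(vehicles, pickups)
```

In this example, a nearest-neighbor choice made when `r1` arrives would pick `v1` (1.4 km) and leave `v2` to drive 4 km to `r2`. The batch search instead assigns `v2` to `r1` and `v1` to `r2`, for a total of 2.6 km instead of 5.4 km.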

After the simulation has run, performance indicators are calculated, such as the mean waiting time, the number of requests that could be served, or the occupancy rate of the vehicles. Based on these indicators, an optimal fleet size for a city can be estimated.
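The indicators named above could be computed from a simulation log roughly as follows. The `RequestRecord` structure, the `service_indicators` function, and the definition of the occupancy rate as occupied vehicle time divided by total fleet time are assumptions of this sketch.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class RequestRecord:
    request_time_s: float
    pickup_time_s: Optional[float]  # None if the request was not served


def service_indicators(records, occupied_vehicle_s, fleet_size, horizon_s):
    """Summarize one simulation run as a dictionary of indicators."""
    served = [r for r in records if r.pickup_time_s is not None]
    waits = [r.pickup_time_s - r.request_time_s for r in served]
    return {
        "mean_waiting_time_s": mean(waits) if waits else None,
        "served_share": len(served) / len(records) if records else None,
        # Share of total fleet time during which vehicles carried passengers.
        "occupancy_rate": occupied_vehicle_s / (fleet_size * horizon_s),
    }
```

Comparing these dictionaries across runs with different fleet sizes is one way to look for the smallest fleet that still meets a target waiting time or served share.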

Parallelization

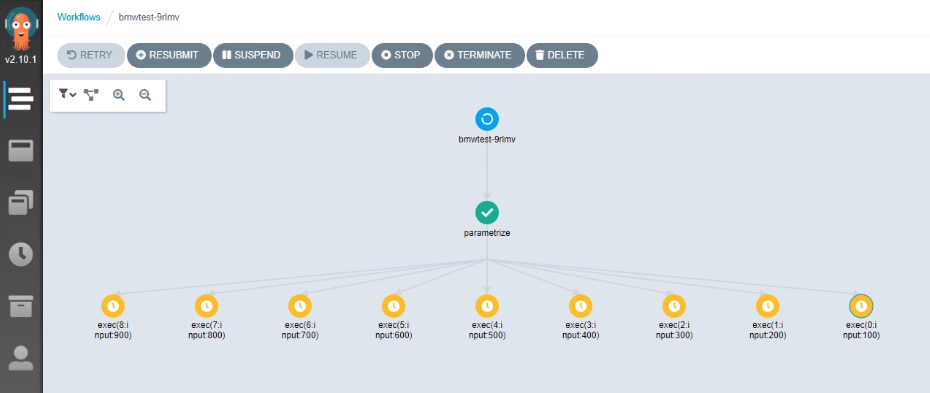

The algorithm can be parallelized in different ways. The most obvious one is to run different scenarios, such as different fleet sizes, in parallel. Because the simulation can be run independently for each parameter set, the problem scales horizontally.

To realize this, BMW uses a Zeppelin notebook to create the different parameter sets and then starts an Argo workflow in which all simulations run in parallel. Here the Evolve platform reduces the calculation time because more CPUs are available than on a single server.
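The pattern of building parameter sets and fanning out independent runs can be sketched locally as shown below. This is only a stand-in: a thread pool replaces the Argo workflow, `run_simulation` is a hypothetical toy model rather than the real simulation, and the function names and numbers are invented for the example.

```python
from concurrent.futures import ThreadPoolExecutor


def build_parameter_sets(fleet_sizes, city="example_city"):
    """Create one parameter set per scenario (here: per fleet size)."""
    return [{"city": city, "fleet_size": n} for n in fleet_sizes]


def run_simulation(params):
    """Placeholder for one full simulation run (hypothetical toy model)."""
    demand_per_hour = 120       # assumed number of requests per hour
    trips_per_vehicle = 4       # assumed trips one vehicle can serve per hour
    served = min(demand_per_hour, params["fleet_size"] * trips_per_vehicle)
    return {**params, "served_share": served / demand_per_hour}


def run_scenarios(parameter_sets, max_workers=4):
    """Run all scenarios concurrently; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_simulation, parameter_sets))


results = run_scenarios(build_parameter_sets([10, 20, 30, 40]))
```

Because the scenarios share no state, each call to `run_simulation` could equally be a separate workflow step on its own CPU. For CPU-bound Python code, threads in a single process give little real speedup, which is one reason to distribute the runs across processes or cluster nodes.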

A single simulation run cannot be scaled horizontally in this way, as it has to be executed sequentially. However, some parts of the algorithm can run in parallel, e.g. the search for the nearest neighbors. In addition, by using libraries capable of high-performance computing (HPC), individual calculations can benefit from Evolve's high-performance cluster. The algorithm has been optimized to use these capabilities.
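One way to parallelize a nearest-neighbor search inside a single time step is to split the candidate vehicles into chunks, search each chunk independently, and then take the best of the partial results. The sketch below illustrates this with a thread pool and straight-line distances; the function names and the chunking scheme are assumptions, not the project's implementation.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def nearest_in_chunk(chunk, target):
    """Return (distance, index) of the closest point within one chunk."""
    return min((math.dist(point, target), index) for index, point in chunk)


def parallel_nearest(points, target, n_chunks=4):
    """Find the index of the point closest to target, and its distance."""
    indexed = list(enumerate(points))
    if not indexed:
        raise ValueError("points must not be empty")
    size = math.ceil(len(indexed) / n_chunks)
    chunks = [indexed[i:i + size] for i in range(0, len(indexed), size)]
    with ThreadPoolExecutor(max_workers=len(chunks)) as pool:
        # Each chunk is searched independently; the partial minima are
        # then reduced to a single global minimum.
        partial = pool.map(nearest_in_chunk, chunks, [target] * len(chunks))
        distance, index = min(partial)
    return index, distance
```

The chunk searches are independent, so the same split-and-reduce structure carries over to process pools or to libraries that run the distance computation on many cores.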

Ongoing work

In the first phase of the project, the algorithms were developed. Recently, the algorithm was parallelized: parts that can run concurrently were split up, and libraries that can use the HPC capabilities of the Evolve cluster were integrated. These optimizations have so far been tested only locally and will now be applied on the Evolve platform. The remaining project time will be used to deploy the improved algorithm to the Evolve platform, to feed in the required data efficiently, and to evaluate different platform usage strategies and the performance gains they deliver.